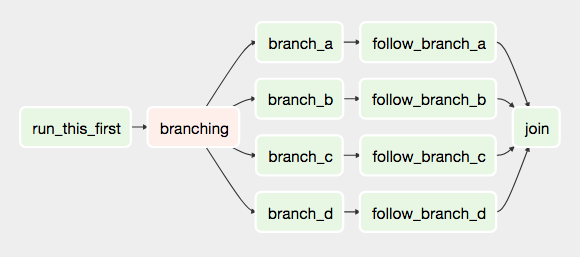

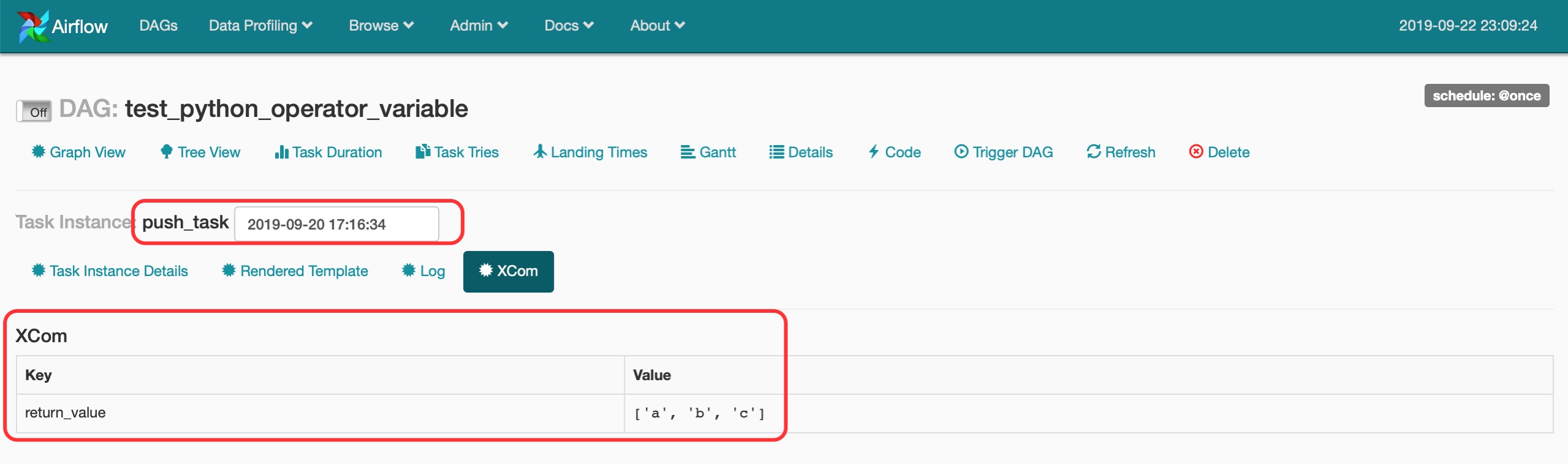

Airflow is a free, open-source workflow management tool from Apache, and arguably one of the best tools available for the job.

When you copy and run the setup script, you perform these steps:

1. Create a directory named `airflow` and change into that directory.
2. Use `pipenv` to create and spawn a Python virtual environment. Databricks recommends using a Python virtual environment to isolate package versions and code dependencies to that environment. This isolation helps reduce unexpected package version mismatches and code dependency collisions.
3. Initialize an environment variable named `AIRFLOW_HOME` set to the path of the `airflow` directory.
4. Install Airflow and the Airflow Databricks provider packages. The provider is installed with `pipenv install apache-airflow-providers-databricks`.
5. Create an `airflow/dags` directory. Airflow uses the `dags` directory to store DAG definitions.
6. Initialize a SQLite database that Airflow uses to track metadata. The SQLite database and default configuration for your Airflow deployment are initialized in the `airflow` directory. In a production Airflow deployment, you would configure Airflow with a standard database.
7. Create an admin user with `airflow users create`, passing `--username admin` and `--role Admin` along with your own values for `--firstname`, `--lastname`, and `--email`.

After setting up DAGs and tasks, we can proceed to set dependencies, i.e. the order in which we want the tasks in a DAG to execute. Let's say we want task t2 to execute after task t1. We then make t2 depend on t1, which means t2 runs only after t1 has completed its work. There are two ways of setting a dependency: the `set_downstream`/`set_upstream` methods, as in `t1.set_downstream(t2)`, or the bitshift operators, as in `t1 >> t2`.
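To make the t1/t2 dependency idea concrete, here is a minimal sketch in plain Python. It is a toy model, not Airflow code: the `Task` class and the `run_order` helper are hypothetical names made up for illustration, and they only mimic the spelling of Airflow's `set_downstream` method and `>>` operator so you can see what declaring a dependency means.

```python
class Task:
    """Toy stand-in for an Airflow task (hypothetical, not Airflow's class)."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.upstream = set()  # task_ids that must finish before this task

    def set_downstream(self, other):
        """Way 1: explicit method call, e.g. t1.set_downstream(t2)."""
        other.upstream.add(self.task_id)

    def __rshift__(self, other):
        """Way 2: bitshift syntax, e.g. t1 >> t2."""
        self.set_downstream(other)
        return other  # returning `other` allows chaining: t1 >> t2 >> t3


def run_order(tasks):
    """Return task_ids in an order that respects every dependency."""
    done, order = set(), []
    pending = list(tasks)
    while pending:
        # A task is ready once all of its upstream tasks are done.
        ready = [t for t in pending if t.upstream <= done]
        if not ready:
            raise ValueError("dependency cycle detected")
        for t in ready:
            order.append(t.task_id)
            done.add(t.task_id)
        pending = [t for t in pending if t.task_id not in done]
    return order


t1, t2, t3 = Task("t1"), Task("t2"), Task("t3")
t1.set_downstream(t2)  # t2 runs only after t1 has completed
t2 >> t3               # t3 runs only after t2 has completed

print(run_order([t3, t2, t1]))  # ['t1', 't2', 't3']
```

Whichever spelling you use, the result is the same: the downstream task records that it must wait for the upstream one, and the order in which the tasks were listed no longer matters.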
Airflow's building blocks come in a few kinds:

- Operators: behave like a function and carry out one specific piece of work. For example, `PythonOperator` executes a function written in Python.
- Transfers: move data from one place to another. For example, `MySqlToHiveTransfer`, as the name suggests, transfers data from MySQL to Hive.
- Sensors: wait for a condition to be met. For example, `HdfsSensor` (the Hadoop File System sensor) keeps running until it detects a file or folder in HDFS.

An operator, once instantiated (assigned to a particular name), is called a task in Airflow. One task per operator is the standard way to write tasks. When defining a task, you must supply a `task_id` and the `dag` container it belongs to. In the PythonOperator demo, for example, the Python callable returns `x + " is best tool for workflow management"`, and the DAG is declared with `description='Demo on How to use the Python Operator?'`.

DagRuns are runs of a DAG that are scheduled at fixed time intervals, such as daily, hourly, or every minute. Each run has an `execution_date`: the first one begins at the `start_date` set in the DAG, and later ones follow at the interval given by `schedule_interval`, which is also set in the DAG.

Tasks that run within a DagRun are called TaskInstances. Every TaskInstance and DagRun must have a state associated with it, such as "running", "failed", "queued", or "skipped".
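As a rough illustration of how DagRuns follow from a DAG's start date and schedule interval, the sketch below lists the first few run dates using only Python's standard library. The helper `dag_run_dates` is a hypothetical name, the dates are arbitrary, and the real scheduler handles many details (time zones and catching up on missed runs, for example) that this sketch ignores.

```python
from datetime import datetime, timedelta


def dag_run_dates(start_date, schedule_interval, count):
    """Return the first `count` execution dates, one per DagRun.

    Hypothetical helper for illustration only; Airflow's scheduler
    computes these internally.
    """
    return [start_date + i * schedule_interval for i in range(count)]


# A DAG that starts on 1 January 2024 and is scheduled daily.
start = datetime(2024, 1, 1)
for run_date in dag_run_dates(start, timedelta(days=1), 3):
    print(run_date.isoformat())
# 2024-01-01T00:00:00
# 2024-01-02T00:00:00
# 2024-01-03T00:00:00
```

Swapping `timedelta(days=1)` for `timedelta(hours=1)` or `timedelta(minutes=1)` gives the hourly and every-minute schedules mentioned above.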
